Demos

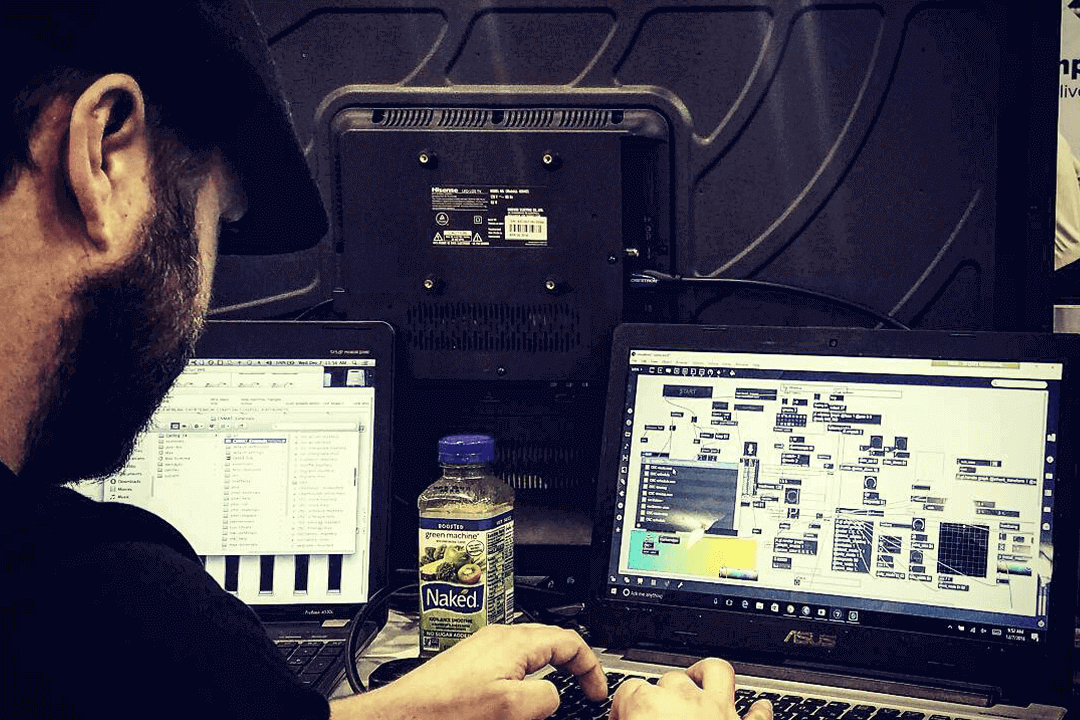

EEG Controlled Composition

I designed generative music composition software that is driven by cognitive thought, emotional states, and facial expressions, using the Emotiv EEG and Max/MSP. I made it for a grad school project, and it’s just one of several. I’m using a similar piece of software to sonify dreams and communicate with people in comas.

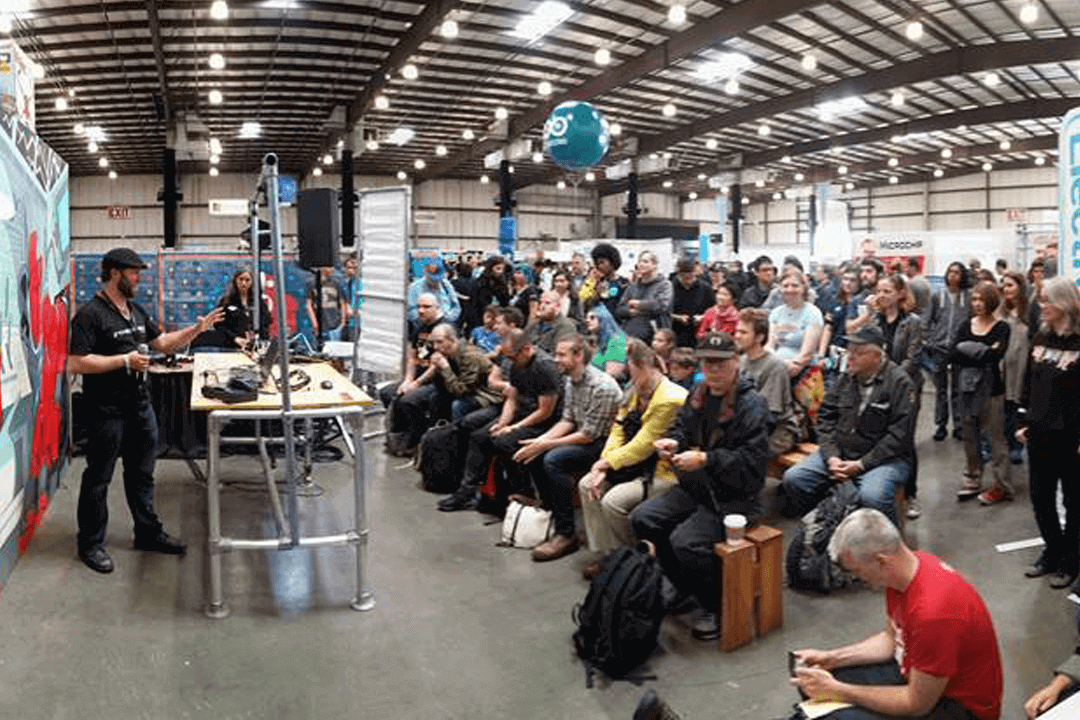

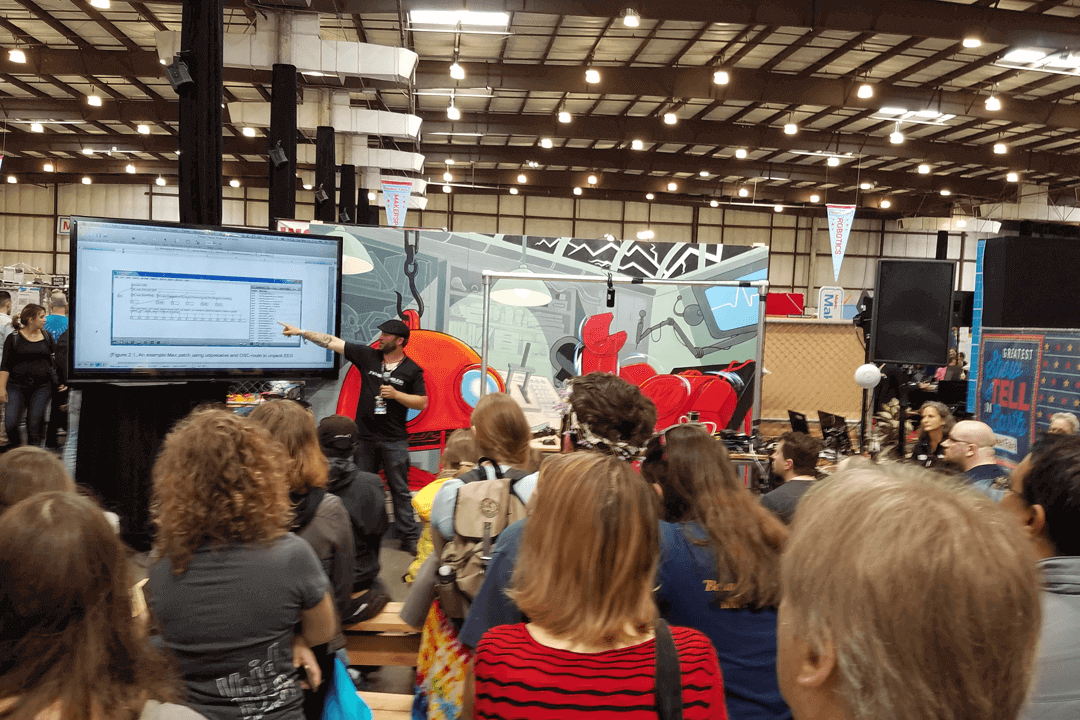

Routing the SSL AWS900 as a MIDI Piano

I’ve routed the SSL console to function as a MIDI piano for use with softsynths in Pro Tools HD 8 and Nuendo 4. The buttons in the master section are the keys, and the faders control the synth parameters: cutoff, resonance, filters, etc. Ignore my crazy hair.
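For anyone curious what a mapping like this looks like in MIDI terms, here is a minimal sketch in Python. It is not the routing I actually built on the console. The base note (middle C), the fader-to-controller table (CC 74 and CC 71, which many synths use for cutoff and resonance), and the function names are all assumptions for illustration. It only builds the raw 3-byte MIDI messages and does not talk to any hardware.

```python
# Hypothetical mapping: console buttons become notes, faders become CC messages.
NOTE_ON = 0x90          # MIDI status byte for Note On (channel 1)
CONTROL_CHANGE = 0xB0   # MIDI status byte for Control Change (channel 1)
BASE_NOTE = 60          # assumed: first button plays middle C

# Assumed fader assignments: CC 74 is commonly cutoff, CC 71 commonly resonance.
FADER_TO_CC = {0: 74, 1: 71}


def button_to_note_on(button_index, velocity=100, channel=0):
    """Return the Note On message for a button, one semitone per button."""
    note = BASE_NOTE + button_index
    if not 0 <= note <= 127:
        raise ValueError("button index maps outside the MIDI note range")
    return bytes([NOTE_ON | channel, note, velocity])


def fader_to_cc(fader_index, position, channel=0):
    """Return the Control Change message for a fader position in 0.0-1.0."""
    position = min(max(position, 0.0), 1.0)   # clamp out-of-range positions
    value = int(position * 127)               # scale to the 7-bit MIDI range
    return bytes([CONTROL_CHANGE | channel, FADER_TO_CC[fader_index], value])


if __name__ == "__main__":
    print(button_to_note_on(4).hex())    # fifth button, four semitones above C
    print(fader_to_cc(0, 0.5).hex())     # cutoff fader at the halfway point
```

The messages could then be handed to whatever MIDI output the DAW is listening on.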

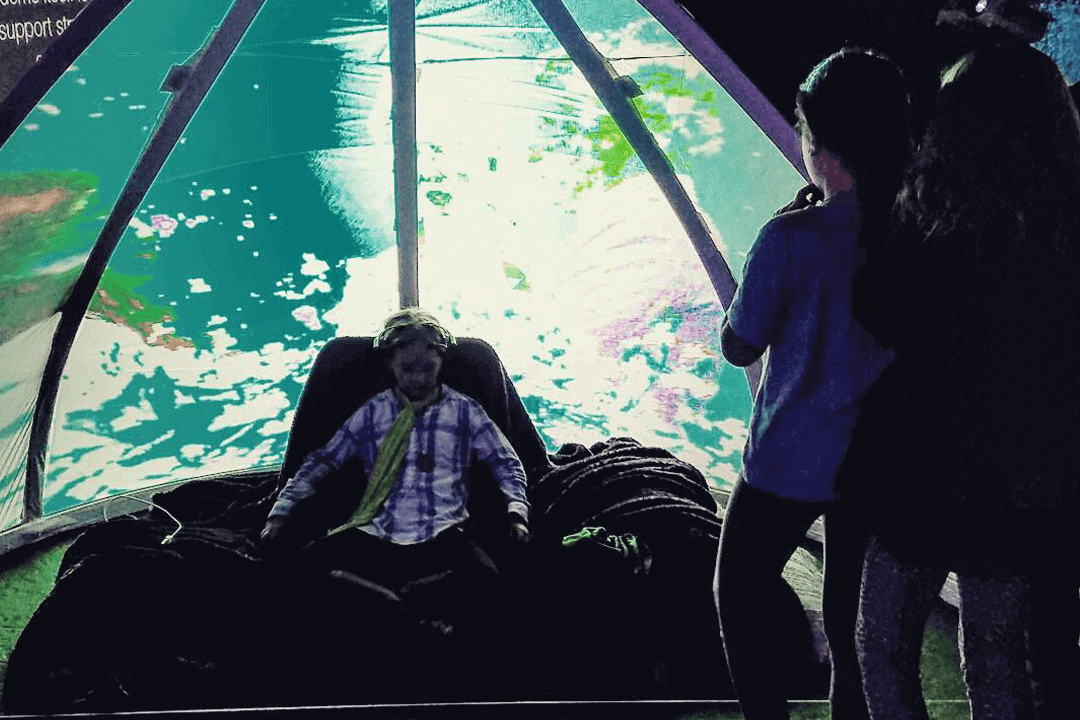

Interactive Projection Mapping

Keep in mind that I shot this in one take to see if it would work, and I added the music later. I was not dancing, because it was silent. I was trying to dodge the light to show that it would follow my face. The video/light projection is live, in real time. The LED in my mouth is infrared and invisible to the naked eye.

Building Cloud Computing Into a Cell Phone

Distributed processing for streaming multichannel audio manipulation through multiple VoIP channels via remote desktop control.

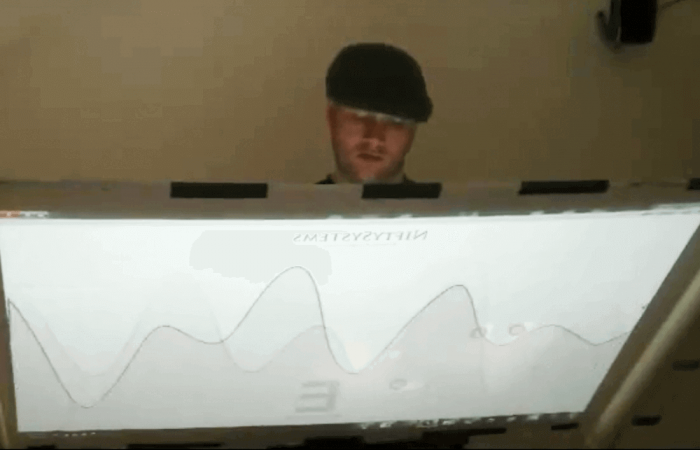

How to Create Your Own Multi-Touch Display

A lot of people have asked me how I made my last two videos. I knew that would happen, so I shot a description video the same day. I have a show coming up that will be filmed, so you can see how I incorporate a variety of technologies on stage.

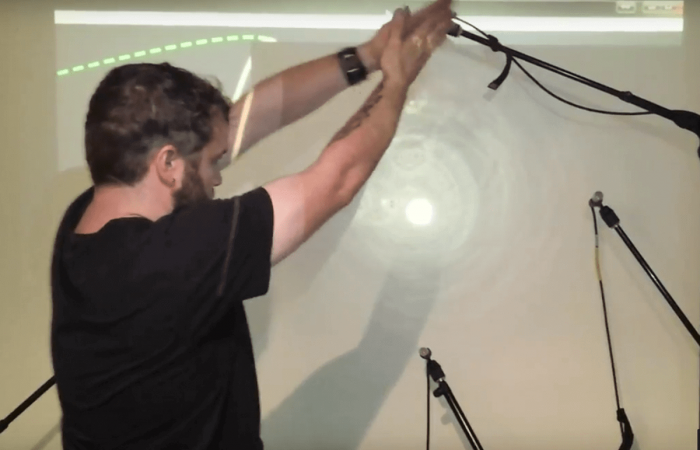

Visualizing Sound

This is a proof-of-concept prototype that uses microphones to locate a sound source and display its location and waveform as ripples. This is what sound looks like: waves bouncing off surfaces like ripples in a pond. The larger version uses a grid of 22 electret mics installed into a projection screen. The frequency is visualized by the width of the ripples, and I’ve shifted/scaled the visible spectrum down to the human hearing spectrum so that the frequencies have corresponding colors. A guitarist and a sax player could play in front of the screen and we could literally see what the sound waves look like as they bounce off the wall.
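The frequency-to-color idea can be sketched in a few lines of Python. This is not the code running in the prototype; it is one possible mapping under stated assumptions: a hearing range of 20 Hz to 20 kHz, a logarithmic scale, low frequencies at the red end and high frequencies at the violet end, and ripple width taken as the physical wavelength at 343 m/s. The function names are made up for illustration.

```python
import colorsys
import math

F_MIN, F_MAX = 20.0, 20000.0   # assumed limits of human hearing, in Hz
SPEED_OF_SOUND = 343.0         # m/s in air at roughly room temperature


def frequency_to_rgb(freq_hz):
    """Map an audible frequency to an 8-bit RGB color, red (low) to violet (high)."""
    f = min(max(freq_hz, F_MIN), F_MAX)                  # clamp to hearing range
    t = math.log(f / F_MIN) / math.log(F_MAX / F_MIN)    # 0.0 at 20 Hz, 1.0 at 20 kHz
    hue = t * 0.75                                       # HSV hue 0.0 is red, 0.75 is violet
    r, g, b = colorsys.hsv_to_rgb(hue, 1.0, 1.0)
    return (round(r * 255), round(g * 255), round(b * 255))


def ripple_width_m(freq_hz):
    """Assumed ripple width: the wavelength of the sound in meters."""
    return SPEED_OF_SOUND / freq_hz


if __name__ == "__main__":
    for freq in (82.4, 440.0, 3520.0):   # low E on a guitar, A4, a high A
        print(freq, frequency_to_rgb(freq), round(ripple_width_m(freq), 3))
```

A log scale is used because pitch perception is roughly logarithmic, so each octave gets an equal slice of the color range.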